cfbtcman (OP)

November 17, 2018, 03:25:57 AM

Today I read a tweet from Roger Ver saying that Bitcoin can't scale and that LN doesn't work; Craig Wright says the same...

What about this:

- Increase the current Bitcoin block size to more MB (as BCH did)

- Dynamically split the block data into several parts, each stored by a different node type (BTC nodes Type A, B, C, etc.), with the number of types tied to the total number of nodes running

Example:

If we double the block size, we double the number of node types:

More nodes = more split types = bigger block size

This way we could save a lot of disk space across the node network. In a group of 1000 nodes, it is sufficient that 500 keep a backup of 50% of the data (Type A nodes) and the other 500 keep a backup of the other 50% (Type B nodes). Having every node in the network store all the information, repeated thousands of times, is a waste of space.

Then each Type A node can "ask" a Type B node for the information it is trying to find, and vice versa, and still get that information without wasting so much space. That would solve the problem of centralized big-block full nodes that no common mortal can manage, as will happen in BCH with big block sizes, like BCHSV going to 128MB and planning to keep scaling.

What do you guys think about this?
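The split described in this post could be sketched roughly as follows. This is only an illustration under stated assumptions: the function names (`node_type_for`, `split_block`, `reassemble`) are hypothetical, the block is cut into equal byte ranges rather than along transaction boundaries, and nothing here addresses how a node would validate the parts it does not store.

```python
import hashlib


def node_type_for(node_id: str, num_types: int) -> int:
    """Map a node identifier to a storage type (0 = Type A, 1 = Type B, ...).

    Hypothetical rule: hash the identifier so that nodes spread roughly
    evenly over the available types.
    """
    digest = hashlib.sha256(node_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_types


def split_block(block: bytes, num_types: int) -> list:
    """Cut raw block data into num_types contiguous parts of near-equal size."""
    part_size = -(-len(block) // num_types)  # ceiling division
    return [block[i * part_size:(i + 1) * part_size] for i in range(num_types)]


def reassemble(parts: list) -> bytes:
    """Rebuild the full block once every part has been fetched."""
    return b"".join(parts)


if __name__ == "__main__":
    block = bytes(range(10))          # stand-in for real block data
    parts = split_block(block, 2)     # Type A keeps parts[0], Type B keeps parts[1]
    print(node_type_for("node-1", 2), [len(p) for p in parts])
    print(reassemble(parts) == block)
```

A Type A node would keep only `parts[0]` of each block and would need a Type B peer to supply `parts[1]` before `reassemble` can return the whole block.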


ABCbits

November 17, 2018, 03:35:00 AM

This has been discussed many times and, unfortunately, the majority of Bitcoiners would disagree, since increasing the block size would increase the cost of running full nodes. Splitting block data across different node types is called sharding, and it has already been proposed many times, for example in "BlockReduce: Scaling Blockchain to human commerce".

Besides, IMO sharding opens lots of attack vectors, increases development complexity and requires more trust.

Additionally, LN helps Bitcoin scaling a lot, even though it's not a perfect solution. Those who say otherwise clearly don't understand how LN works and its potential. Lots of cryptocurrencies, including Ethereum, are preparing 2nd-layer/off-chain scaling solutions because they know it's a good approach.

cfbtcman (OP)

November 17, 2018, 04:26:02 AM

This idea doesn't require increasing the block size. You can split nodes even with 2MB blocks, and you would save a lot of space on nodes and reduce the cost of running full nodes. But if you increase the block size and at the same time split the nodes, like dynamic P2P data, the cost of running a node stays the same as before.

Even with LN there will be a huge amount of disk space wasted on all the nodes repeating the same information, and it is a pain in the ass to install and run a full node. If there are 9000 nodes today and another 9000 are added to the network tomorrow, it doesn't make sense to repeat the information so many times; it's a waste of space.

I'm not 100% up to speed on how LN works, but what happens if I switch off my LN node, with all its channels, forever? Are bitcoins lost or not?

pooya87

November 17, 2018, 04:55:54 AM

The problem with increasing block size as the only solution for scaling is that no matter how much you increase it, it will not be enough for a global payment system that has to process a lot of transactions per second.

You will eventually need a solution that doesn't need block space for increasing availability, and that is a second-layer solution like the Lightning Network.


cfbtcman (OP)

November 17, 2018, 05:21:07 AM

I understand your point of view, but I think that if the whole world uses LN, a 2MB block size would not be sufficient for the on-chain transactions people would want to make to move between the blockchain and off-chain, so the block size needs to increase sooner or later. It would have been much better for everyone if that had been done without the BTC/BCH split. LN can run over BCH too, so...

But I have a new idea: my proposal can be implemented without changing the block size, only to save disk space on nodes, and I think it could be applied without hard-forking. For example, we could create a new node version that stays 100% compatible with the current Core, splits the data and communicates with the new node types to save disk space, and acts as a normal node when communicating with existing nodes.

That would be something very nice! Could some expert help implement it?

pooya87

November 17, 2018, 05:37:31 AM

Quote from cfbtcman: "I understand your point of view, but i think if all the world uses LN the 2MB blocksize would not be sufficient to do the ONCHAIN transactions the people would want to do from blockchain to off-chain and vice-versa, so, block size needs to upgrade sooner or later,"

I completely agree! And I think everyone already knows that LN relies on on-chain scaling. It is called the second layer for a reason: it is a layer that sits on top of another layer, which needs to be functioning well.

Quote from cfbtcman: "it would have been much better for everyone if it was done without BTC/BCH split, LN can run over BCH too, so..."

That fork-off had nothing to do with scaling in my opinion; it was more like an attempt to take over and make money. Not to mention that the whole motto of BCH is that the only way to scale is on-chain. So no, LN, or any other second-layer solution by whatever name, will not "run over BCH" ever.

Quote from cfbtcman: "500 save backup of 50% of the data (Type A node) and the other 50% can save backup of the other 50% of the data (Type B node)"

So let's say there is a transaction Tx1 and it is in the other half of the "data", held by Type B nodes, and say I run a Type A node. If someone pays me by spending Tx1, I do not have it in my database (blockchain), so how can I know it is a valid transaction? Am I supposed to assume on faith that it is valid?! Or am I supposed to connect to another node, ask whether the transaction is valid, and then trust that node to tell me the truth? How would you prevent fraud?


bones261

November 17, 2018, 06:47:50 AM

Last edit: November 17, 2018, 07:14:17 AM by bones261

Quote from pooya87: "the problem with increasing block size as the only solution for scaling is that no matter how much you increase it, it will not be enough for a global payment system which has to process a lot of transactions per second. you will eventually need a solution that doesn't need block space for increasing availability. and that is the second layer solution like lightning network."

That's not entirely accurate. 10GB blocks would be sufficient to scale to the level of Visa. However, many nodes would find it hard or impossible to keep up with that kind of capacity. In a decade or so, though, handling that capacity will probably be trivial. The LN will definitely be less resource intensive. Unfortunately, I do not personally trust the LN in its current state. I find it particularly troubling that I could end up closing a channel in an earlier state due to a system issue on my end, and then get penalized harshly as if I were trying to scam someone.

Edit: OMG, just did a little more research and found this gem: https://bitcoin.stackexchange.com/questions/58124/how-do-i-restore-a-lightning-node-with-active-channels-that-has-crashed-causing

Really? If my node crashes I can lose all of my funds??? That's even worse than my first concern. How long has this been in development? It appears that LN developers have a long way to go before they can make this pig fly. I'll keep the faith, I guess.

pooya87

Quote from bones261: "That's not entirely accurate. 10GB blocks would be sufficient to scale on the level of Visa."

No, it won't be enough, because you can't spam the VISA network but you can spam bitcoin blocks and fill them up.

Besides, block size is not just about having more space. You have to download, verify and store 10GB of transaction data every 10 minutes on average, and also upload even more, depending on how many nodes you connect to and how much you want to contribute.

Ask yourself this: would you be willing to run, or capable of running, a full node that requires 1.44 TB of disk space per day, an internet speed of at least 20 Mbps and an internet cap above 3 TB per day? That is 43 TB per month. Can your hardware (CPU, RAM) handle verification of that much data?
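The figures in this post can be reproduced with a short back-of-the-envelope calculation. The sketch below assumes decimal units (1 TB = 1000 GB), a 30-day month, and one block every 10 minutes; the function name `node_requirements` and the 250-byte average transaction size are illustrative assumptions, not values from the thread.

```python
def node_requirements(block_size_gb: float,
                      interval_s: int = 600,
                      avg_tx_bytes: int = 250) -> dict:
    """Estimate the load a full node faces for a given block size.

    avg_tx_bytes is an assumed average transaction size, used only to
    turn block space into a rough transactions-per-second figure.
    """
    blocks_per_day = 24 * 60 * 60 // interval_s
    storage_tb_per_day = block_size_gb * blocks_per_day / 1000
    return {
        "blocks_per_day": blocks_per_day,
        "storage_tb_per_day": storage_tb_per_day,
        "storage_tb_per_month": storage_tb_per_day * 30,
        # sustained rate needed just to download each block before the next arrives
        "download_mbit_per_s": block_size_gb * 1000 * 8 / interval_s,
        "tx_per_second": block_size_gb * 1e9 / avg_tx_bytes / interval_s,
    }


if __name__ == "__main__":
    for name, value in node_requirements(10).items():
        print(f"{name}: {value:,.2f}")
```

For 10GB blocks this gives 144 blocks per day, about 1.44 TB of new data per day and about 43.2 TB per month, matching the post. The sustained download rate alone works out to roughly 133 Mbit/s (about 16.7 MB/s), so the 20 Mbps figure reads as closer to a megabytes-per-second estimate.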


DooMAD

November 17, 2018, 07:59:50 AM

Quote from cfbtcman: "its sufficient that in a group of 1000 nodes, 500 save backup of 50% of the data (Type A node) and the other 50% can save backup of the other 50% of the data (Type B node), its a waste of space all the nodes in the network save all information repeated thousands of times. Then each node Type A can "ask" to other node Type B the information is trying to find and vice-versa and gets the information anyway"

Bitcoin was designed to be trustless. The idea of running a node is that you can validate and verify every single transaction yourself. If you run a Type A node, you would have to trust the Type B nodes to do half of the validation for you. If you're going to do that, why not just trust Visa and forget all about Bitcoin?


cfbtcman (OP)

November 17, 2018, 08:59:55 AM

Quote from pooya87: "so lets say there is a transaction Tx1 and it is in the other half of the "data" in node Type B. and say i run node Type A. if someone pays me by spending Tx1, i do not have it in my database (blockchain) so how can i know it is a valid transaction? am i supposed to assume that it is valid on faith?! or am i supposed to connect to another node and ask if the transaction is valid and then trust that other node to tell me the truth? how would you prevent fraud?"

We ask a Type B node for the other part of the data and check it. There will be hundreds or thousands of Type A and Type B nodes, so we could check it against more than one Type B node. But think of a pair of Type A and Type B nodes as one full node: together they hold 100% of the block data, which can be checksummed and verified. It is like reading from our own disk in another cluster.

The more nodes we have, the more splits we can do. If my Type A node stores 2MB of block data, your Type B node stores another 2MB and a Type C node another 2MB, we have 6MB blocks with only 2MB stored on each node type.
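The storage arithmetic in this reply can be written out as a small sketch. The function names are hypothetical, and the even spread of nodes across types is an assumption; the last function shows how much a node would have to fetch from other types if it wanted to check a whole block itself.

```python
def per_node_block_mb(total_block_mb: float, num_types: int) -> float:
    """Block data each node type stores when a block is split evenly."""
    return total_block_mb / num_types


def copies_of_each_part(total_nodes: int, num_types: int) -> int:
    """How many nodes hold each part, assuming nodes spread evenly over types."""
    return total_nodes // num_types


def fetch_to_check_whole_block_mb(total_block_mb: float, num_types: int) -> float:
    """Data a node must request from other types to see the entire block."""
    return total_block_mb - per_node_block_mb(total_block_mb, num_types)


if __name__ == "__main__":
    # The 6MB / three-type example from the post, on a 9000-node network.
    print(per_node_block_mb(6, 3))              # stored per node type
    print(copies_of_each_part(9000, 3))         # replicas of each part
    print(fetch_to_check_whole_block_mb(6, 3))  # fetched to check a full block
```

With 6MB blocks and three node types, each type stores 2MB per block, each part has 3000 copies on a 9000-node network, and a node that wants the full block still has to download the remaining 4MB from its peers.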

odolvlobo

November 17, 2018, 09:14:35 AM

Quote from cfbtcman: "We ask the other Type B node for the other part of data and we check it, ..."

If A nodes must also download B blocks and B nodes must also download A blocks, then you have accomplished nothing by splitting them.


DooMAD

November 17, 2018, 09:28:20 AM

Quote from cfbtcman: "We ask the other Type B node for the other part of data and we check it, ..."

Quote from odolvlobo: "If A nodes must also download B blocks and B nodes must also download A blocks, then you have accomplished nothing by splitting them."

I assumed it was meant as a partial SPV setup. Each type of node would be 50% SPV. But yeah, it's not something that most users would be interested in pursuing as a concept.
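For readers unfamiliar with SPV, the check such a client performs can be sketched as a Merkle-branch verification. This is a simplified illustration, not Bitcoin Core code: the helper names are hypothetical, the leaves are assumed to be transaction hashes, and byte-order details of real block headers are ignored. It follows the double SHA-256 and "duplicate the last hash on odd levels" rules of Bitcoin's block Merkle tree.

```python
import hashlib


def dsha256(data: bytes) -> bytes:
    """Double SHA-256, the hash used in Bitcoin's Merkle tree."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def _next_level(level: list) -> list:
    """Hash adjacent pairs; an odd level repeats its last entry first."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [dsha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list) -> bytes:
    """Root of a non-empty list of leaf hashes."""
    level = list(leaves)
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_branch(leaves: list, index: int) -> list:
    """Sibling hashes a full node would hand over for the leaf at index."""
    branch, level = [], list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        branch.append(level[index ^ 1])  # sibling sits next to us in the pair
        level = _next_level(level)
        index //= 2
    return branch


def verify_branch(leaf: bytes, index: int, branch: list, root: bytes) -> bool:
    """Recompute the root from one leaf and its branch, as an SPV client would."""
    h = leaf
    for sibling in branch:
        h = dsha256(sibling + h) if index & 1 else dsha256(h + sibling)
        index //= 2
    return h == root


if __name__ == "__main__":
    txids = [dsha256(bytes([i])) for i in range(5)]  # stand-in transaction hashes
    root = merkle_root(txids)
    print(verify_branch(txids[3], 3, merkle_branch(txids, 3), root))
```

A successful check only shows that a transaction is included in a block whose header the client already accepts. It does not show that the transaction's inputs were valid or unspent, which is the part a node would be taking on trust for data it does not hold.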


cfbtcman (OP)

November 17, 2018, 09:53:52 AM

Quote from DooMAD: "I assumed it was meant as a partial SPV setup. Each type of node would be 50% SPV. But yeah, it's not something that most users would be interested in pursuing as a concept."

Yeah, something like that. We would ask only for the data of the blocks we need, and we can test its integrity by checksum, or simply by asking randomly chosen other Type B nodes for the same information; if the result is the same from 2, 3 or 4 other nodes, that proves integrity.

I can't see any other way of shrinking blockchain sizes. This technique is like P2P file sharing: each seed doesn't need to have the whole file, since there would be enough trusted seeds in the network, each with some % of the file, that together hold the whole information.

It's genius, I am Satoshi

buwaytress

November 17, 2018, 10:09:00 AM

Doesn't make sense to me. If the result is the same for 4 nodes, but only for particular blocks, that proves only that these nodes agree on those particular blocks. Unless your node has verified every block once, there's no way to prove integrity by this random checking.

And besides, in P2P file sharing, a seed technically is a peer with 100% of the files. You're not seeding until you have the full file; anything less than 100% just makes you a peer.

Doesn't pruning already address the size concern as well?

cfbtcman (OP)

November 17, 2018, 10:47:51 AM

Last edit: November 18, 2018, 12:30:25 AM by cfbtcman

Those particular blocks are the ones containing the information you are trying to find, such as the transactions for a specific address.

The number of node types is not a static thing. As the block size increases, the number of split parts increases too: we can have Type A, B, C, D, E, F and so on, to keep the block data stored by each node type at only 2MB.

Each node type would have 100% of its 50%, so it could be a seed, but call it a peer if you want.

Look at this simple logic: if you had 1 million nodes at this moment, do you think it would be logical to have 1 million full nodes running? For me that would be a complete waste of resources, and for sure it would be safer to put some node on the moon! It's like the storjcoin service: surely they calculate a safe number of backups around the world, enough for redundancy but no more than is reasonable.

About validation/verification, I think a way could be found for each node type to validate/verify its own part of the data.

bones261

November 17, 2018, 04:03:08 PM

Quote from pooya87: "no it won't be enough because you can't spam VISA network but you can spam bitcoin blocks and fill them up. ..."

First off, it would be nice if you quoted enough of my text to keep it in context...

Quote from bones261: "That's not entirely accurate. 10GB blocks would be sufficient to scale on the level of Visa. However, many nodes would find it hard or impossible to keep up with that kind of capacity. However, in a decade or so, handling that capacity will probably be trivial."

I've already acknowledged this isn't practical right now. However, unless technology has somehow reached its limits, it may be feasible in a decade or so. Also, in order to combat "spam," miners already have the option to not include transactions below a certain fee. They can make it very expensive for someone to try to spam the network. Also, with bigger blocks, it probably won't be worth the waste of resources for a miner to attempt to drive up the fee market by including spam in their blocks.

Furthermore, in another thread, Greg Maxwell himself has acknowledged that a pruned node is a full node. There is really no need for every single full node to also store the entire blockchain permanently. There are sufficient entities out there, like exchanges, large pools and hardware wallet providers, who would probably want to store the entire blockchain. I think enough of them would run "archival" nodes for the network to remain decentralized.

I also acknowledged in my original post that a second-layer solution will always end up using fewer resources. However, the current LN carries large risks of someone losing their funds due to either system errors or human error. I'm certainly not going to hang my hat on the LN until the developers and the network itself can show results that are acceptable. Right now, I wouldn't store 1 sat in a channel on the Lightning Network. The risk of losing funds is just too high.

cellard

The main problem is that most of these scaling solutions that are being proposed will first require a hardfork. This means we'll have the drama of 2 competing bitcoins trying to claim that they are the real one (see the BCash ABC vs BCash SV ongoing war right now). This will not end well. Without consensus we will just end up with 2 bitcoins which are in sum of lesser value than before the hardfork happened.

Most bitcoin whales don't support any of the proposed scaling solutions so far so your scaling fork will end up dumped by tons of coins.

bones261

November 17, 2018, 04:59:20 PM

Unlike BCH, the BTC network already has consensus mechanisms in place that it is willing to use to ensure the vast majority of the network is on the same page before proceeding. As demonstrated by the UASF, we can also implement ways to ensure the miners can be persuaded to go along with the wishes of the non-mining users. If someone doesn't want to wait for a high consensus in order to implement their "improvements," they are free to go fork off. That's why we already have hundreds of altcoins right now.

As I have already acknowledged, the "bigger block" solution probably won't be practical for at least a decade or so. I have also acknowledged that the second-layer solution would probably end up being more efficient with resources. However, it is nice to know that there is a plan "B" for scaling, just in case the problems with the LN cannot be overcome.

ABCbits

November 17, 2018, 05:11:57 PM

Looks like no one remembers transaction compression (reducing transaction size). This is similar to internet scaling in the past, where people focused only on increasing bandwidth rather than compressing the content, and compression formats such as MP3 solved many problems (including helping internet scaling a bit).

IMO, Bitcoin needs all of them (n-layer networks, a higher block size limit and smaller transactions) to be able to scale without lots of security/decentralization trade-offs.

cellard

November 17, 2018, 06:29:16 PM

Quote from bones261: "Unlike, BCH, the BTC network already has consensus mechanisms in place that they are willing to use in order to ensure the vast majority of the network is on the same page before proceeding. ..."

You can't really know whether "the vast majority of the network" is on the same page until D-day actually comes. We have already seen miners signal a supposed "intention" to support something with their hashrate, and then, when the day comes, some of them backpedal. You would also need all exchanges on board. And ultimately you would need all whales on board, and many of them may not bother to give their opinion at all; then the day of the fork comes and you see a huge dump on your forked coin.

You basically need 100% consensus for a hardfork to be a success and not end up with 2 coins, and I don't see how this is ever possible once a project gets as big as Bitcoin is. (I mean, it's still small in the grand scheme of things, but in terms of open source software development and network effect, it's big enough that it can never hardfork seamlessly again.) Maybe I'm wrong and there will be consensus for a hardfork in the future, but again, I don't see how.

bones261

What's this fear of having 2 coins? We already have hundreds of coins, most of them being more or less hard forks off of BTC. The free market will decide which coins persist and which coins go by the wayside. BTC has already demonstrated over and over again that it is the honey badger. If we honestly have faith that BTC is anti-fragile, pesky minority coins are nothing but a mere nuisance.

BitHodler

November 17, 2018, 10:44:35 PM

Absolutely nothing is wrong with that. I would even like to say that it improves scalability potential with how you no longer have to care about another side thinking its own roadmap and implementation is the one to follow. Remember the drama we went through before the Bcash split? They are gone and we no longer have to care about what their plans are, which is the best thing that could happen to Bitcoin.

I get it that people want to protect Bitcoin, or that they feel they have to, but if it is the unbreakable powerhouse people say it is, then why worry about what's going to happen? Let the economy do its work. Most forks will fail anyway because they won't be backed by any noteworthy players. There won't be a second Bcash. We've seen the worst.

BSV is not the real Bcash. Bcash is the real Bcash.

|

|

|

cfbtcman (OP)

Member

Offline Offline

Activity: 264

Merit: 16

|

|

November 18, 2018, 12:42:30 AM |

|

The main problem is that most of these scaling solutions that are being proposed will first require a hardfork. This means we'll have the drama of 2 competing bitcoins trying to claim that they are the real one (see the BCash ABC vs BCash SV ongoing war right now). This will not end well. Without consensus we will just end up with 2 bitcoins which are in sum of lesser value than before the hardfork happened.

Most bitcoin whales don't support any of the proposed scaling solutions so far so your scaling fork will end up dumped by tons of coins.

Hi, I think we don't need a hard fork to implement something like node Types A, B, C, D...; they could exist alongside full nodes. We would only need to hard fork later, if we want to increase the block size, and maybe not even then, because the other day I read about a way to change the block size without hard forking.

Even Satoshi left the block size limit so that it could be changed in the future, and we really will need to change it: even with LN working 100%, 2MB will not support all the on-chain to off-chain transactions and vice versa.

If we don't do that, it would be dangerous for layer 1. Who would support layer 1 nodes and mining if almost 100% of transactions were done on layer 2? Satoshi said that once all 21 million coins are mined, miners would be supported only by transaction fees. What transactions, if everyone only uses LN? When you want to put money on-chain the miners might ask you for $5000, because there would be very few transactions and those transactions would have to cover all of layer 1's expenses.
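The fee worry in the post above comes down to simple division. Here is a rough sketch, assuming (hypothetically) that miners need a fixed dollar budget per block and that fees alone must cover it once the block subsidy is gone. The function name and the 100,000 USD budget are made-up illustration values; only the $5000 figure echoes the post.

```python
def fee_needed_per_tx(budget_per_block_usd: float, txs_per_block: int) -> float:
    """Average fee each transaction must carry if fees alone fund the block."""
    if txs_per_block <= 0:
        raise ValueError("a block needs at least one fee-paying transaction")
    return budget_per_block_usd / txs_per_block


# Hypothetical budget: 100,000 USD of mining cost per block.
busy_chain = fee_needed_per_tx(100_000, 2_000)  # many on-chain payments
quiet_chain = fee_needed_per_tx(100_000, 20)    # almost everything moved to layer 2
print(busy_chain, quiet_chain)
```

Whether on-chain demand would really collapse that far is exactly what the thread is arguing about; the sketch only shows how the per-transaction fee scales as the number of on-chain transactions shrinks.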

|

|

|

|

cellard

Legendary

Offline Offline

Activity: 1372

Merit: 1252

|

|

November 18, 2018, 03:52:12 PM |

|

You can't really know whether "the vast majority of the network" is on the same page until D-day actually comes. We have already seen miners signal their supposed "intention" to support something with their hashrate, and then when the day comes some of them backpedal. You would also need all exchanges on board. And ultimately you would need all whales on board, and many of them may not bother to voice their opinion at all; then the day of the fork comes and you see a huge dump on your forked coin.

You basically need 100% consensus for a hardfork to succeed and not end up with 2 coins, and I don't see how this is ever possible once a project gets as big as Bitcoin is (I mean it's still small in the grand scheme of things, but in terms of open source software development and network effect, it's big enough that it can never hardfork seamlessly again). Maybe I'm wrong and there will be consensus for a hardfork in the future, but again I don't see how.

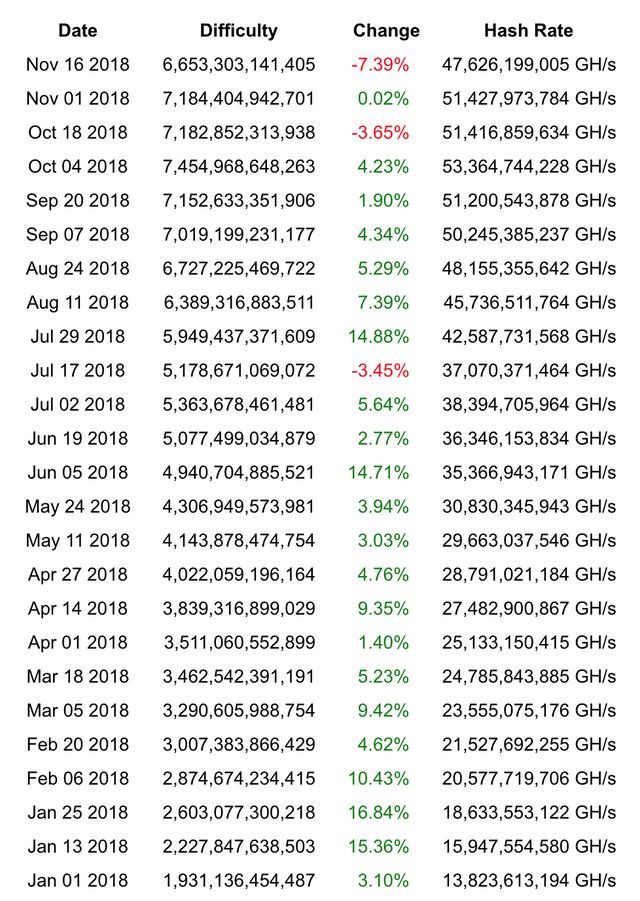

What's this fear of having 2 coins? We already have hundreds of coins, most of them being more or less hard forks off of BTC. The free market will decide which coins persist and which coins go by the wayside. BTC has already demonstrated over and over again that it is the honey badger. If we honestly have faith that BTC is anti-fragile, pesky minority coins are nothing but a mere nuisance.

Well, isn't it obvious? Ask someone with a decent amount at stake in Bitcoin whether they want to see, say, their 100 BTC crash in value because someone decided to hardfork with the same hashing algorithm, which means you will see miners speculating with their hashrate while they can. See the recent dip in hashrate (chart above), which is the biggest loss of hashrate at a difficulty adjustment this year.

I guarantee you that Bitcoin holders don't appreciate this bullshit. Not that these forks pose a systemic risk for Bitcoin, but they are annoying and slow down the rocket.

Anyhow, the real question should be: what's the fear of starting your own altcoin if it's such a good idea, instead of constantly trying to milk 5 minutes of fame for your altcoin by forking it off Bitcoin? If your idea is so good, start it as an actual altcoin and compete.

|

|

|

|

DooMAD

Legendary

Online Online

Activity: 3780

Merit: 3116

Leave no FUD unchallenged

|

|

November 18, 2018, 04:10:28 PM |

|

What's this fear of having 2 coins? We already have hundreds of coins, most of them being more or less hard forks off of BTC. The free market will decide which coins persist and which coins go by the wayside. BTC has already demonstrated over and over again that it is the honey badger. If we honestly have faith that BTC is anti-fragile, pesky minority coins are nothing but a mere nuisance.

Well, isn't it obvious? Ask someone with a decent amount at stake in Bitcoin whether they want to see, say, their 100 BTC crash in value because someone decided to hardfork with the same hashing algorithm, which means you will see miners speculating with their hashrate while they can. See the recent dip in hashrate, which is the biggest loss of hashrate at a difficulty adjustment this year. I guarantee you that Bitcoin holders don't appreciate this bullshit. Not that these forks pose a systemic risk for Bitcoin, but they are annoying and slow down the rocket.

This sounds like an argument that the feelings of those who hold bitcoin because they're speculating on the price should somehow outweigh the feelings of those who hold Bitcoin because they appreciate the fundamental principles of freedom and permissionlessness. It's an argument for the ages, certainly, but one that neither side will ever back down from.

Ideological differences are naturally going to occur and forks are going to happen. Your desire to see higher prices isn't going to prevent that. These are exactly the risks people should consider when they invest if all they care about is the potential profit. Bitcoin is still very much the "Wild West of money". Don't decide to play cowboy if you can't handle some bandits every now and then.


|

|

|

aliashraf

Legendary

Offline Offline

Activity: 1456

Merit: 1174

Always remember the cause!

|

|

November 18, 2018, 04:46:40 PM

Last edit: November 18, 2018, 06:09:37 PM by aliashraf |

|

I suppose this thread is going off the rails by falling into the old "To fork or not to fork? This is the question!" story, in which cellard is an expert. The fact is, no matter what bitcoin whales want, forks happen, and sooner or later we will need to retire the current bitcoin as the formerly legitimate chain and have the community converge on an improved version. You just can't stop evolution from happening, ok?

For now, I'm trying to save this thread by rolling back to the original technical discussion and sharing an interesting idea with you guys. Meanwhile, please focus:

So let's say there is a transaction Tx1 and it is in the other half of the "data", in a Type B node, and say I run a Type A node. If someone pays me by spending Tx1, I do not have it in my database (blockchain), so how can I know it is a valid transaction? Am I supposed to assume that it is valid on faith?! Or am I supposed to connect to another node, ask if the transaction is valid, and then trust that other node to tell me the truth? How would you prevent fraud?

What OP proposes, as other posters in the thread have correctly categorized it, is a special version of sharding. Although it is an open research field, it is a MUST for bitcoin. Pushing the scaling problem onto second-layer protocols (like LN) is the worst idea because you can't simulate bitcoin on top of bitcoin as a second layer; it is absurd. Going to a second layer won't happen without giving up some essential feature of bitcoin, or at least tolerating centralization and censorship threats, compromising the cause. So this is it, our destiny: we need a scalable blockchain solution, and as of now all we've got is sharding.

Back to Pooya's objection: it arises when a transaction that is supposed to be processed in one partition/shard tries to access an unspent output from another shard. I think there may be a workaround for this. Suppose sharding is based on transaction partitioning with a simple mod operation, where txid mod N determines the transaction's partition number in an N-shard network, and we put a constraint on transactions such that wallets are highly disincentivized from (or not allowed to be) making a transaction from heterogeneous outputs, i.e. outputs from transactions in multiple shards. Now we have this transaction tx1 whose spent outputs all belong to transactions maintained on the same shard; the problem is, to which shard does the transaction itself belong? The trick is to add a nonce field to the transaction format and have the wallet client software perform something like N/2 hashes (a very small amount of work) to find a nonce that makes txid mod N land in the same shard as the outputs it spends. For coinbase transactions, miners should take the same measure.

It looks somewhat scary, being so partitioned, but I'm working on it as it looks very promising to me.
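The nonce trick from the post above can be sketched in a few lines. This is only an illustration under stated assumptions: the transaction is reduced to an opaque byte string, the nonce is a hypothetical 4-byte field appended to it, and the txid is a double SHA-256 read as an integer. None of this is an existing Bitcoin transaction format. Note also that if the hash is uniformly distributed, each attempt hits the target shard with probability 1/N, so the expected number of attempts is about N rather than N/2; either way the work is tiny.

```python
import hashlib


def txid(payload: bytes, nonce: int) -> int:
    """Double SHA-256 over payload plus a 4-byte nonce (hypothetical layout)."""
    data = payload + nonce.to_bytes(4, "little")
    digest = hashlib.sha256(hashlib.sha256(data).digest()).digest()
    return int.from_bytes(digest, "big")


def shard_of(tx_id: int, n_shards: int) -> int:
    """Partition rule from the post: txid mod N."""
    return tx_id % n_shards


def grind_nonce(payload: bytes, target_shard: int, n_shards: int,
                max_tries: int = 1_000_000) -> int:
    """Try nonces in order until the txid lands in target_shard."""
    for nonce in range(max_tries):
        if shard_of(txid(payload, nonce), n_shards) == target_shard:
            return nonce
    raise RuntimeError("no suitable nonce found within max_tries")


# The spent outputs live in shard 3 of an 8-shard network (made-up numbers).
nonce = grind_nonce(b"inputs-and-outputs-of-tx1", target_shard=3, n_shards=8)
print(nonce, shard_of(txid(b"inputs-and-outputs-of-tx1", nonce), 8))
```

The sketch does not address the harder part of the proposal, which is what a wallet does when the coins it wants to spend already sit in different shards.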

|

|

|

|

cfbtcman (OP)

Member

Offline Offline

Activity: 264

Merit: 16

|

|

November 18, 2018, 11:09:21 PM |

|

Then how about backward compatibility with older nodes/clients?

1. They wouldn't know that they need to connect to different types of nodes to get and verify all transactions

2. There's no guarantee they will get every transaction/block, as there's no guarantee they connect to all the different types of nodes

I think it's possible to create a new Bitcoin Core version that supports Type nodes, emulating normal node communication with old nodes and using the new Type-node communication with new ones, at least with the current block size. But that would only be an experiment, because without bigger blocks it is not useful.

|

|

|

|

cfbtcman (OP)

Member

Offline Offline

Activity: 264

Merit: 16

|

|

November 18, 2018, 11:21:22 PM |

|

I suppose this thread is going off the rails by falling into the old "To fork or not to fork? This is the question!" story, in which cellard is an expert. The fact is, no matter what bitcoin whales want, forks happen, and sooner or later we will need to retire the current bitcoin as the formerly legitimate chain and have the community converge on an improved version. You just can't stop evolution from happening, ok?

For now, I'm trying to save this thread by rolling back to the original technical discussion and sharing an interesting idea with you guys. Meanwhile, please focus:

So let's say there is a transaction Tx1 and it is in the other half of the "data", in a Type B node, and say I run a Type A node. If someone pays me by spending Tx1, I do not have it in my database (blockchain), so how can I know it is a valid transaction? Am I supposed to assume that it is valid on faith?! Or am I supposed to connect to another node, ask if the transaction is valid, and then trust that other node to tell me the truth? How would you prevent fraud?

What OP proposes, as other posters in the thread have correctly categorized it, is a special version of sharding. Although it is an open research field, it is a MUST for bitcoin. Pushing the scaling problem onto second-layer protocols (like LN) is the worst idea because you can't simulate bitcoin on top of bitcoin as a second layer; it is absurd. Going to a second layer won't happen without giving up some essential feature of bitcoin, or at least tolerating centralization and censorship threats, compromising the cause. So this is it, our destiny: we need a scalable blockchain solution, and as of now all we've got is sharding.

Back to Pooya's objection: it arises when a transaction that is supposed to be processed in one partition/shard tries to access an unspent output from another shard. I think there may be a workaround for this. Suppose sharding is based on transaction partitioning with a simple mod operation, where txid mod N determines the transaction's partition number in an N-shard network, and we put a constraint on transactions such that wallets are highly disincentivized from (or not allowed to be) making a transaction from heterogeneous outputs, i.e. outputs from transactions in multiple shards. Now we have this transaction tx1 whose spent outputs all belong to transactions maintained on the same shard; the problem is, to which shard does the transaction itself belong? The trick is to add a nonce field to the transaction format and have the wallet client software perform something like N/2 hashes (a very small amount of work) to find a nonce that makes txid mod N land in the same shard as the outputs it spends. For coinbase transactions, miners should take the same measure.

It looks somewhat scary, being so partitioned, but I'm working on it as it looks very promising to me.

Woow, you are the man

|

|

|

|

|

mechanikalk

|

|

November 18, 2018, 11:22:27 PM |

|

This has been discussed many times and unfortunately, the majority of Bitcoiners would disagree, since increasing the block size would increase the cost of running full nodes. Splitting block data across many different node types is called sharding and has already been proposed many times, such as BlockReduce: Scaling Blockchain to human commerce. Besides, IMO sharding opens lots of attack vectors, increases development complexity and requires more trust.

Additionally, LN helps bitcoin scaling a lot, even though it's not a perfect solution. Those who said that clearly don't understand how LN works and its potential. Lots of cryptocurrencies, including Ethereum, are preparing 2nd-layer/off-chain scaling solutions because they know it's a good scaling solution.

If anyone is interested in a simpler understanding of BlockReduce, they can check out this 30 minute intro that I presented at the University of Texas Blockchain Conference. I ultimately don't think it is all that complicated; it is really just multithreading Bitcoin and tying it back together with merge mining.

BlockReduce Presentation

In terms of issues with Lightning, I think that the biggest problem is the cost of capital. Transaction fees are necessarily going to be non-zero in economic equilibrium because node operators will need to pay for the cost of money, or opportunity cost. Therefore, you would expect to pay ~1-4% annually on the value of a transaction that takes place in a channel that stays open for any period of time. The fee will also need to account for the costs of opening and closing the channel. The only reason that LN kinda works is because people are not fully accounting for the cost of capital needed to run the nodes.
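The cost-of-capital argument above can be put into numbers. This is a back-of-the-envelope sketch, not a model of real Lightning fee policy: the function name, the simple (non-compounding) interest formula, and every figure in the example are assumptions chosen for illustration. Only the 1-4% annual range comes from the post.

```python
def break_even_fee_per_payment(locked_capital: float, annual_rate: float,
                               days_open: float, onchain_fees: float,
                               payments_routed: int) -> float:
    """Fee per routed payment that just covers capital cost plus open/close fees."""
    if payments_routed <= 0:
        raise ValueError("payments_routed must be positive")
    # Opportunity cost of the funds locked in the channel, simple interest.
    capital_cost = locked_capital * annual_rate * days_open / 365
    return (capital_cost + onchain_fees) / payments_routed


# Made-up example: 1000 USD locked for a full year at 4%, 10 USD total to open
# and close the channel, 100 payments routed over the channel's lifetime.
fee = break_even_fee_per_payment(1000.0, 0.04, 365, 10.0, 100)
print(round(fee, 2))
```

The break-even fee falls as more payments are routed over the same locked capital, which is why the conclusion depends heavily on how busy a channel is assumed to be.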

|

|

|

|

cfbtcman (OP)

Member

Offline Offline

Activity: 264

Merit: 16

|

|

November 19, 2018, 03:23:03 PM

Last edit: November 19, 2018, 06:10:48 PM by cfbtcman |

|

People, what about something like this: https://ibb.co/c249h0

Vertical blockchain block scaling.

- Each block would have the same size limit as now.
- There would be the possibility of creating many "vertical" blocks in each 10-minute interval when the mempool is overloaded.
- Each new blockchain layer would be part of a new layer node, so we could have infinite Layer Type nodes.
- Those Layer Type nodes would hold less repeated data, so we save disk space. If we have 1 million nodes we don't need to save all the information in every node; that's a waste. So when someone wants to install a node, the node install program will suggest the best Layer Type node to install, the one the system needs most.
- The Layer Type nodes exchange information between them, something like pruned nodes.
- This way we prevent the download and upload of big quantities of data, as happens with BCH with its huge block sizes.
- All the Layer Type nodes share the information they need, which can be checked against the information each Layer Type node already has; everything is hashed with everything.

|

|

|

|

|

mechanikalk

|

|

November 19, 2018, 04:51:24 PM |

|

People, what about something like this: https://ibb.co/c249h0

Vertical blockchain block scaling. Each block would have the same size limit as now. There would be the possibility of creating many "vertical" blocks in each 10-minute interval when the mempool is overloaded. Each new blockchain layer would be part of a new layer node, so we could have infinite Layer Type nodes. Those Layer Type nodes would hold less repeated data, so we save disk space; if we have 1 million nodes we don't need to save all the information in every node, that's a waste. So when someone wants to install a node, the node install program will suggest the best Layer Type node to install, the one the system needs most, and the Layer Type nodes exchange information, something like pruned nodes. This way we prevent the download and upload of big quantities of data per block, as happens with BCH with its huge block sizes. All the Layer Type nodes share the information they need, which can be checked against the information each Layer Type node already has; everything is hashed with everything.

The issue with your proposal above is that you do not know which transactions to include in which vertical block. You will not know which transactions take precedence, and you will introduce a mechanism by which a double spend can occur, because conflicting transactions could be put into two blocks at once. However, I do think you are thinking in the right direction. You should check out BlockReduce, which is similar to this idea but moves transactions through a PoW-managed hierarchy to ensure consistency of state. If the manuscript is a bit long, please also check out a presentation I did at the University of Texas Blockchain Conference.
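The double-spend concern raised in the reply above can be made concrete with a toy check. This is a sketch under simplifying assumptions: transactions are reduced to an id plus the list of outpoints they spend, and the block names, transaction ids and outpoint labels are all hypothetical. It only shows that two blocks built in parallel, each valid on its own, can still conflict with each other.

```python
from collections import defaultdict


def find_double_spends(blocks):
    """Map each outpoint spent more than once to the (block, txid) pairs spending it.

    blocks: dict of block name -> list of (txid, [outpoints spent]).
    """
    spenders = defaultdict(list)
    for block_name, txs in blocks.items():
        for tx_id, spent in txs:
            for outpoint in spent:
                spenders[outpoint].append((block_name, tx_id))
    # Keep only outpoints that more than one transaction tries to spend.
    return {op: who for op, who in spenders.items() if len(who) > 1}


# Two hypothetical "vertical" blocks produced in the same 10-minute interval.
parallel_blocks = {
    "layer-0": [("tx_a", ["utxo_1"])],
    "layer-1": [("tx_b", ["utxo_1"]), ("tx_c", ["utxo_2"])],
}
conflicts = find_double_spends(parallel_blocks)
print(conflicts)
```

Detecting the conflict is the easy part; the open question in the thread is which of the two transactions takes precedence, and who decides.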

|

|

|

|

Anti-Cen

Member

Offline Offline

Activity: 210

Merit: 26

High fees = low BTC price

|

|

November 21, 2018, 11:41:56 AM |

|

"Lightning network" == Mini banks

I did warn you all, and no, the so-called new "Off-Block" hubs did not save BTC, as we can see from the price.

CPU-Wars, a mere 9 transactions per second from 20,000 miners and fees hitting $55 per transaction is what the BTC code will be remembered for as it enters our history books, just like Tulip Mania did in the 1600s.

Casino managers are not the best people in the world to take financial advice from, and the same goes for the disinformation moderator here who keeps pressing the delete button because he hates the truth being exposed.

|

Mining is CPU-wars, and Intel and AMD like it nearly as much as big oil likes miners wasting electricity. Is this what mankind has come to?

|

|

|

HeRetiK

Legendary

Offline Offline

Activity: 2926

Merit: 2091

Cashback 15%

|

|

November 21, 2018, 12:33:14 PM |

|

"Lightning network" == Mini banks

They're not.

I did warn you all, and no, the so-called new "Off-Block" hubs did not save BTC, as we can see from the price

Caring about short-term fluctuations is usually a sign of a lack of long-term thinking.

CPU-Wars, a mere 9 transactions per second from 20,000 miners and fees hitting $55 per transaction is what the BTC code will be remembered for as it enters our history books, just like Tulip Mania did in the 1600s

Irrelevant to the discussion.

Casino managers are not the best people in the world to take financial advice from, and the same goes for the disinformation moderator here who keeps pressing the delete button because he hates the truth being exposed.

See above, which may also be the reason why some of these posts got deleted, rather than a hidden conspiracy by big crypto.


|

|

|

DooMAD

Legendary

Online Online

Activity: 3780

Merit: 3116

Leave no FUD unchallenged

|

|

November 21, 2018, 01:57:44 PM |

|

<snip>

Off topic, but I thought RNC and all related accounts were banned? I did have a link, but copying it on a phone is a ballache.

//EDIT: https://bitcointalk.org/index.php?topic=2617240.msg31377296#msg31377296

RNC admitted to ban evasion and being Anti-Cen there.


|

|

|

cfbtcman (OP)

Member

Offline Offline

Activity: 264

Merit: 16

|

|

November 21, 2018, 09:46:36 PM

Last edit: November 22, 2018, 12:36:58 AM by cfbtcman |

|

"Lightning network" == Mini banks

I did warn you all, and no, the so-called new "Off-Block" hubs did not save BTC, as we can see from the price.

CPU-Wars, a mere 9 transactions per second from 20,000 miners and fees hitting $55 per transaction is what the BTC code will be remembered for as it enters our history books, just like Tulip Mania did in the 1600s.

Casino managers are not the best people in the world to take financial advice from, and the same goes for the disinformation moderator here who keeps pressing the delete button because he hates the truth being exposed.

The exchanges are already the mini banks, and maybe worse than Lightning Network. With LN it's like you put in one month's salary, then pay your expenses and receive payments back, spending and earning with the same money; if you lose money you won't lose that much. The current exchanges are worse: MtGox, Btc-e, Wex, etc. are stealing millions and nobody cares.

Maybe BTC is a little complicated for the common citizen and we need those "mini or big banks" to work together.

|

|

|

|

Wind_FURY

Legendary

Offline Offline

Activity: 2912

Merit: 1825

|

|

November 22, 2018, 08:42:28 AM |

|

I would be very curious what Bitcoin's network topology will look like if by some miraculous event, Bitcoin hard forks to bigger blocks and sharding, with consensus. Haha.

Plus big blocks are inherently centralizing the bigger they go. Wouldn't sharding only prolong the issue, and not solve it?

|


|

|

|

aliashraf

Legendary

Offline Offline

Activity: 1456

Merit: 1174

Always remember the cause!

|

|

November 22, 2018, 09:30:15 AM |

|

I would be very curious what Bitcoin's network topology will look like if by some miraculous event, Bitcoin hard forks to bigger blocks and sharding, with consensus. Haha.

Plus big blocks are inherently centralizing the bigger they go. Wouldn't sharding only prolong the issue, and not solve it?

OP's proposal is a multilevel hierarchical sharding scheme in which bigger blocks are handled at the top level. As I have debated extensively earlier in the thread, a lot of issues remain unsolved, but I think we need to take every sharding idea seriously and eventually figure out a solution to the scaling problems; hence hierarchical schemes are promising enough to be discussed and improved, imo.

|

|

|

|

greg458

Jr. Member

Offline Offline

Activity: 336

Merit: 2

|

|

November 22, 2018, 10:22:41 PM |

|

The main problem is that most of these scaling solutions that are being proposed will first require a hardfork. This means we'll have the drama of 2 competing bitcoins trying to claim that they are the real one (see the BCash ABC vs BCash SV ongoing war right now). This will not end well. Without consensus we will just end up with 2 bitcoins which are in sum of lesser value than before the hardfork happened.

Most bitcoin whales don't support any of the proposed scaling solutions so far so your scaling fork will end up dumped by tons of coins.

|

|

|

|

|

Wind_FURY

Legendary

Offline Offline

Activity: 2912

Merit: 1825

|

|

November 23, 2018, 06:11:31 AM |

|

I would be very curious what Bitcoin's network topology will look like if by some miraculous event, Bitcoin hard forks to bigger blocks and sharding, with consensus. Haha.

Plus big blocks are inherently centralizing the bigger they go. Wouldn't sharding only prolong the issue, and not solve it?

OP's proposal is a multilevel hierarchical sharding scheme in which bigger blocks are handled at the top level.

Ok, but big blocks are inherently centralizing the bigger they go, are they not? Sharding would only prolong the scaling issue on the network, on any blockchain network.

As I have debated extensively earlier in the thread, a lot of issues remain unsolved, but I think we need to take every sharding idea seriously and eventually figure out a solution to the scaling problems; hence hierarchical schemes are promising enough to be discussed and improved, imo.

For Bitcoin? I believe it would be better proposed in a network that has big blocks, and does not have firm restrictions on hard forks. Bitcoin Cash ABC. |


|

|

|

franky1

Legendary

Offline Offline

Activity: 4214

Merit: 4475

|

|

November 23, 2018, 01:18:15 PM

Last edit: November 24, 2018, 09:29:01 AM by franky1 Merited by bones261 (2), ABCbits (1) |

|

its sufficient that in a group of 1000 nodes, 500 save backup of 50% of the data (Type A node) and the other 50% can save backup of the other 50% of the data (Type B node), its a waste of space all the nodes in the network save all information repeated thousands of times.

Then each node Type A can "ask" to other node Type B the information is trying to find and vice-versa and gets the information anyway

Bitcoin was designed to be trustless. The idea of running a node is that you can validate and verify every single transaction yourself. If you run a Type A node, you would have to trust the Type B nodes to do half of the validation for you. If you're going to do that, why not just trust Visa and forget all about Bitcoin? finally doomad sees the light about "compatibility not = full node".. and how "compatible" is not good for the network.. one merit earned... may he accept the merit and drop that social drama debate now he seen the light. onto the topic the hard fork of removing full nodes that can only accept 1mb blocks has been done already, in mid 2017. reference the "compatible" nodes still on the network are not full nodes no more. all is required is to remove the "witness scale factor" and the full 4mb can be utilised by legacy transactions AND segwit transactions. this too can have positives by removing alot of wishy washy lines of code too and bring things back inline with a code base that resembled pre segwit block structuring to rsemble a single block structure where everything is together that doesnt need to be stripped/"compatible". yes the "compatible" nodes would stall out and not add blocks to their hard drive. but these nodes are not full nodes anyway so people using them might aswell just use litewallets and bloom filter transaction information they NEED for personal use. we will then have the network able to actually handle more tx/s at a 4x level rather than a 1.3-2.5x level(which current segwit blockstructure LIMITS (yep even with 4mb suggested weight. actual calculations limit it to 2.5x compared to legacy 1mb)) ... as for how to scale onchain. please do not throw out "LN is the solution" or "servers will be needed" or "you cant buy coffee" 1. instead of needing LN for coffee by channelling to starbucks. just onchain use a tx to btc buy a $40 starbucks giftcard once a fortnight. 
after all from a non technical real life utility perception of average joe. if your LN locking funds with starbucks for a fortnight anyway. its the same 'feel' as just pre-buying a fortnights worth of coffee via a giftcard. (it also solves the routing, multiple channel requirement for good connection, spendability, also the other problems LN has) 2. onchain scaling is not about just raising the blocksize. its also about reducing the sigops limit so that someone cant bloat/maliciously fill a block with just 5 transactions to use up the limits. EG blocksigops=80k and txsigops=16k meaning 5 tx's can fill a block should they wish. this is by far a bad thing to let continue to be allowed as a network rule.* 3. point 2 had been allowed to let exchanges batch transactions into single transactions of more in/outputs so that exchanges could get cheaper fee's. yet if an exchange is being allowed to bloat a block alone. then that exchange should be paying more for that privelige, not less. (this stubbornly opens up the debate of should bitcoins blockchain be only used by reserve hoarders of multiple users in permissioned services(exchanges/ln factories).. or should the network be allowing individuals wanting permissionless transacting).. in my view permissioned services should be charged more than an individual 4. and as we move away from centralised exchanges that hoard coins we will have less need for xchanges to batch such huge transactions and so there will be less need of such bloated transactions 5. scaling onchain is not just about raising the blocksize. its about making it more expensive for users who transact more often than those who transact less frequently. EG imagine a person spend funds to himself every block. and was doing it via 2000 separate transactions a block (spam attack) he is punishing EVERYONE else. as others that only spends once a month are finding that the fee is higher, even though they have not done nothing wrong. 
The blocks are still only collating the same 2000 tx average, so from a technical perspective the spammer is not causing any more 'processing cost' to the mining pool nodes' tx-into-block collation mechanism (they still only collate ~2000 tx, so no cost difference). So why is the whole network being punished due to one person's spam?

The person spending every block should pay more for spending funds that have fewer confirms than others. In short, the more confirms your UTXO has, the cheaper the transaction gets. That way spammers are punished more.

This can go a stage further: the child fee also increases based not just on how young the parent is but also on how young the grandparent is.

In short, bring back a fee priority mechanism, but one that concentrates on age of UTXO rather than value of UTXO (which the old one did). |
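
The capacity figures argued over in this post can be checked with a few lines of arithmetic. The sketch below assumes the BIP141 weight formula (non-witness bytes count four times, witness bytes once, capped at 4,000,000 weight units) and takes the sigops figures from point 2 (80k per block, 16k per transaction) as given. The function names and the example witness shares are hypothetical and only there for illustration; nothing here is a real node API.

```python
# Illustrative arithmetic only; the names are hypothetical, not Bitcoin Core APIs.

MAX_BLOCK_WEIGHT = 4_000_000   # weight-unit cap per block (BIP141)
WITNESS_SCALE_FACTOR = 4       # non-witness bytes count 4x, witness bytes 1x
BLOCK_SIGOPS_LIMIT = 80_000    # per-block figure quoted in point 2
TX_SIGOPS_LIMIT = 16_000       # per-transaction figure quoted in point 2


def max_block_bytes(witness_fraction: float) -> float:
    """Largest total block size in bytes when `witness_fraction` of all bytes is witness data.

    weight = 4 * non_witness + 1 * witness = total * (4 - 3 * witness_fraction)
    """
    if not 0.0 <= witness_fraction <= 1.0:
        raise ValueError("witness_fraction must be between 0 and 1")
    discount = (WITNESS_SCALE_FACTOR - 1) * witness_fraction
    return MAX_BLOCK_WEIGHT / (WITNESS_SCALE_FACTOR - discount)


def txs_needed_to_exhaust_sigops() -> int:
    """How many maximum-sigops transactions use up the whole block's sigops budget."""
    return BLOCK_SIGOPS_LIMIT // TX_SIGOPS_LIMIT


if __name__ == "__main__":
    for fraction in (0.0, 0.3, 0.6):
        size_mb = max_block_bytes(fraction) / 1_000_000
        print(f"witness share {fraction:.0%}: max block ~{size_mb:.2f} MB")
    print("max-sigops txs to fill a block:", txs_needed_to_exhaust_sigops())
```

Under these assumptions a block with no witness data is capped at 1 MB, and the total only approaches 4 MB as the witness share approaches 100%. That gap between the 4MB headline and what typical transactions can use is what the post is objecting to.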

I DO NOT TRADE OR ACT AS ESCROW ON THIS FORUM EVER.

Please do your own research & respect what is written here as both opinion & information gleaned from experience. many people replying with insults but no on-topic content substance, automatically are 'facepalmed' and yawned at

|

|

|

DooMAD

Legendary

Online Online

Activity: 3780

Merit: 3116

Leave no FUD unchallenged

|

|

November 23, 2018, 02:11:55 PM |

|

5. Scaling onchain is not just about raising the blocksize. It's about making it more expensive for users who transact more often than for those who transact less frequently.

E.g. imagine a person spends funds to himself every block, and does it via 2000 separate transactions a block (spam attack).

He is punishing EVERYONE else, as others who only spend once a month find that the fee is higher even though they have done nothing wrong.

The blocks are still only collating the same 2000 tx average, so from a technical perspective the spammer is not causing any more 'processing cost' to the mining pool nodes' tx-into-block collation mechanism (they still only collate ~2000 tx, so no cost difference).

So why is the whole network being punished due to one person's spam?

The person spending every block should pay more for spending funds that have fewer confirms than others. In short, the more confirms your UTXO has, the cheaper the transaction gets. That way spammers are punished more.

This can go a stage further: the child fee also increases based not just on how young the parent is but also on how young the grandparent is.

In short, bring back a fee priority mechanism, but one that concentrates on age of UTXO rather than value of UTXO (which the old one did).

If you just stuck to raising points like this, rather than simply attacking everything that others are trying to build, I wouldn't have to spend so much time arguing with you. This is one of those rare cases where we actually agree on something. My only minor critique of this post is that you did a much better job of explaining this concept here:

Imagine that we decided it's acceptable that people should have a way to get priority if they have a lean tx and signal that they only want to spend funds once a day, where if they want to spend more often, costs rise, and if they want a bloated tx, costs rise. That then allows those who just pay their rent once a month or buy groceries every couple of days to be OK using onchain bitcoin, while trying to spam the network (every block) becomes expensive, to the point where they would be better off using LN (for things like faucet raiding every 5-10 minutes).

So let's think about a priority fee that's not about rich vs poor but about respend spam and bloat. Let's imagine we actually use the tx age combined with CLTV to signal the network that a user is willing to add some maturity time if their tx age is under a day: to signal they want it confirmed while allowing themselves to be locked out of spending for an average of 24 hours. And the bloat of the tx vs the blocksize has some impact too, rather than the old formula, which was more about the value of the tx.

As you can see, it's not about tx value. It's about bloat and age. This way those who don't want to spend more than once a day and don't bloat the blocks get preferential treatment onchain.

- If you are willing to wait a day but you're taking up 1% of the blockspace, you pay more.
- If you want to be a spammer spending every block, you pay the price.
- And if you want to be a total ass-hat and be both bloated and respending often, you pay the ultimate price.

I've yet to hear any technical arguments from anyone as to why this isn't a good idea and something we should be seriously looking at. In fact, I'd even suggest you start a new topic in Development & Technical Discussion just for this point alone. |
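
The formulas referenced in the quoted post did not survive in this thread, so what follows is only a rough sketch of the idea as it is described in words. It shows a fee multiplier that grows with how recently the inputs were confirmed and with the share of block space the transaction takes, next to the old coin-age priority (input value × confirmations ÷ size) for contrast. The 144-block "day", the 1 MB yardstick, the shape of the multiplier, and every function name are assumptions made for illustration, not franky1's actual formula. The CLTV maturity signal is modelled simply as extra blocks the sender agrees to be locked for.

```python
from dataclasses import dataclass
from typing import List

BLOCKS_PER_DAY = 144          # assumption: ~10-minute blocks
MAX_BLOCK_BYTES = 1_000_000   # assumption: block space used as the bloat yardstick


@dataclass
class TxInput:
    value_sats: int      # amount being spent
    confirmations: int   # age of the UTXO in blocks


def legacy_priority(inputs: List[TxInput], tx_bytes: int) -> float:
    """Old-style coin-age priority: sum(value * age) / size. Large-value inputs win."""
    return sum(i.value_sats * i.confirmations for i in inputs) / tx_bytes


def age_bloat_multiplier(inputs: List[TxInput], tx_bytes: int, locked_blocks: int = 0) -> float:
    """Hypothetical fee multiplier driven only by age and bloat, never by value.

    Age: the youngest input sets the penalty; a day or more of age (or of
    voluntarily signalled lock time) means no age penalty.
    Bloat: each 1% of block space used adds 1x to the multiplier.
    """
    if not inputs:
        raise ValueError("a transaction needs at least one input")
    youngest = min(i.confirmations for i in inputs)
    effective_age = min(youngest + locked_blocks, BLOCKS_PER_DAY)
    age_penalty = BLOCKS_PER_DAY / max(effective_age, 1)   # 1.0 when a day old, 144.0 when fresh
    bloat_penalty = 1.0 + 100.0 * tx_bytes / MAX_BLOCK_BYTES
    return age_penalty * bloat_penalty


if __name__ == "__main__":
    day_old = [TxInput(50_000, 144)]
    fresh = [TxInput(50_000, 1)]
    print("lean, day old:      ", age_bloat_multiplier(day_old, 250))
    print("bloated, day old:   ", age_bloat_multiplier(day_old, 10_000))
    print("lean, every block:  ", age_bloat_multiplier(fresh, 250))
    print("bloated and fresh:  ", age_bloat_multiplier(fresh, 10_000))
    print("fresh but locked:   ", age_bloat_multiplier(fresh, 250, locked_blocks=143))
```

The four unlocked cases line up with the list in the quote: the lean, day-old spend pays close to the base fee, the bloated one pays more, the every-block respender pays far more, and the transaction that is both pays the most. A sender who signals a lock is treated like the day-old case. The real weights would need to be argued out; this only shows that value never enters the calculation.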


|

|

|

franky1

Legendary

Offline Offline

Activity: 4214

Merit: 4475

|

|

November 23, 2018, 02:59:46 PM

Last edit: November 23, 2018, 03:11:26 PM by franky1 |

|

If you just stuck to raising points like this, rather than simply attacking everything that others are trying to build, I wouldn't have to spend so much time arguing with you. This is one of those rare cases where we actually agree on something.

I raise many points many times. The thing is, you meander in when the 4-letter word for the center of an apple is mentioned, so you only see that point.

It's like if I mentioned "you never see a blue Lambo on the road". Suddenly you start looking out for one, and you only notice blue Lambos and react/get emotional every time you see one, without noticing how little you observe, or react to, other vehicles.

That said, the 4-letter word you defend is actually the ones that do type the code and do release the code, because all others have been pushed off the network or pushed out of the relay layer into the downstream "compatibility" layer of the network topology.

So just running nodes won't change the rules. Just mining hashpower won't change the rules. We need actual devs to code rules, which goes against your perception of what devs should be doing, and that is what we disagree on.

Devs should be listening to the community.

Again, what's the point of me posting something about a rule change, like the one in the second part of your reply, if your side feels that devs should not listen to the community and should just do whatever they please?

Do you at least see my point that the network should not have a powerhouse that ignores the community simply because something doesn't fit "their" roadmap?

But seeing as you're on their side, how about just forwarding the second part of your post to them? You can even say it's your idea if it helps. I have thrown many ideas out and let anyone take them and use them as their own.

Like I said a few times, I'm not writing code as an authoritarian demanding people follow me. I never have. I just inform people what could/should be done and hope it wakes people up to see there are other options than just the 4-letter word's roadmap, and that we should not just blindly follow a roadmap like sheep as if it's the only way. |


|

|

|

DooMAD

Legendary

Online Online

Activity: 3780

Merit: 3116

Leave no FUD unchallenged

|

|

November 23, 2018, 03:50:52 PM |

|

We need actual devs to code rules, which goes against your perception of what devs should be doing, and that is what we disagree on.

Devs should be listening to the community.

Again, what's the point of me posting something about a rule change, like the one in the second part of your reply, if your side feels that devs should not listen to the community and should just do whatever they please?

Do you at least see my point that the network should not have a powerhouse that ignores the community simply because something doesn't fit "their" roadmap?